February 04, 2026 • 10 min read

Your design team just quit.

Well, not literally—but they might as well have. Three designers, tasked with producing 15-20 ad creatives every week, are running on fumes. The feedback loop is chaos: "We need this by tomorrow." "Can you make 5 variations?" "Change the hook again—it's not working."

Meanwhile, you're spending $20 on a creative, seeing no conversions, and killing it. Then spending another $25 on a different concept. Same result. Your testing budget is bleeding, your team is exhausted, and you still don't know what works.

Here's the truth: High-volume creative testing doesn't require more designers. It requires a better system.

Most teams fail because they're solving the wrong problem. They think they need to produce more creatives faster. But the real problem? They don't know which creatives to produce, how to test them properly, or when to kill them.

Here's what happens: Your designer creates 5 completely different concepts. You upload all 5 simultaneously. Meta picks a favorite within 2 hours (usually randomly). That creative gets 80% of your $100 daily budget. The other 4 get $5 each—not enough for meaningful data. After 3 days, nothing seems to work, so you kill everything and start over.
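To make the starvation concrete, here is a minimal sketch of the arithmetic in that scenario. The function name `split_budget` is just an illustration, and the 80% share is the figure from the example above, not something Meta guarantees.

```python
def split_budget(daily_budget, n_ads, favorite_share):
    """Split one day's budget between the favored ad and the remaining ads."""
    favorite_spend = daily_budget * favorite_share
    other_spend_each = (daily_budget - favorite_spend) / (n_ads - 1)
    return favorite_spend, other_spend_each


# Figures from the scenario above: $100/day, 5 ads, 80% going to the favorite.
favorite_spend, other_spend_each = split_budget(100, 5, 0.80)
print(f"Favorite ad: ${favorite_spend:.2f}/day")
print(f"Each other ad: ${other_spend_each:.2f}/day")
print(f"Each other ad after 3 days: ${other_spend_each * 3:.2f}")
```

Even after three days, each of the four starved ads has spent only a small fraction of what the favorite received.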

Result? Burned-out team, wasted budget, zero learnings.

The solution is systematic testing with controlled variables.

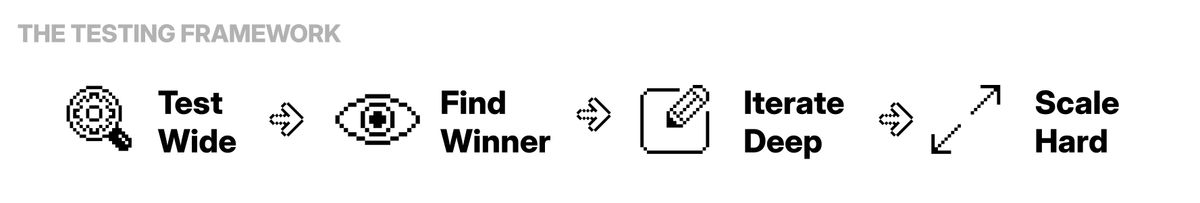

The Testing Framework

Objective: Find one winning concept out of 10-15 variations

What to test: Completely different hooks and angles

Structure: 1 campaign, 1 broad ad set (ages 25-65, broad targeting), 2-3 new ads uploaded daily

Budget: $10-15 per creative minimum (15 creatives = $150-225 daily)

Kill rules:

Winner signals: CTR >2%, CPC <50% of average, 25%+ video completion, 3+ conversions at target CPA
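If you track these numbers in a spreadsheet export, the winner signals can be checked mechanically. The sketch below is one possible reading: it assumes "CPC <50% of average" means below half of your account's average CPC, and it reports each signal separately rather than deciding how many must be met. The `CreativeStats` fields and the sample values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CreativeStats:
    ctr: float               # click-through rate as a fraction (0.02 = 2%)
    cpc: float               # cost per click in dollars
    video_completion: float  # fraction of viewers who finish the video
    conversions: int
    cpa: float               # cost per acquisition in dollars


def winner_signals(stats, avg_cpc, target_cpa):
    """Return which of the four winner signals a creative currently meets."""
    return {
        "ctr_above_2pct": stats.ctr > 0.02,
        "cpc_below_half_of_average": stats.cpc < 0.5 * avg_cpc,
        "video_completion_25pct_plus": stats.video_completion >= 0.25,
        "3_plus_conversions_at_target_cpa": (
            stats.conversions >= 3 and stats.cpa <= target_cpa
        ),
    }


# Hypothetical creative, account-average CPC, and target CPA.
example = CreativeStats(ctr=0.025, cpc=0.60, video_completion=0.30,
                        conversions=4, cpa=38.0)
signals = winner_signals(example, avg_cpc=1.50, target_cpa=40.0)
for name, met in signals.items():
    print(f"{name}: {'yes' if met else 'no'}")
```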

Objective: Identify the 1-2 concepts that actually convert profitably

By day 7: 10-15 tested → 2-3 with promising metrics → 1-2 with actual conversions at acceptable CPA

Critical question: Which has conversions, not just engagement? High CTR with $150 CPA loses to lower CTR with $40 CPA.

Action: Kill everything except 1-2 creatives with best CPA and >5 conversions.
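As a rough sketch of that filter, the snippet below keeps only creatives with more than 5 conversions and ranks them by CPA (spend divided by conversions). The creative names and numbers are made up for illustration.

```python
def pick_survivors(creatives, min_conversions=5, keep=2):
    """Keep up to `keep` creatives with the lowest CPA among those with
    more than `min_conversions` conversions."""
    eligible = [c for c in creatives if c["conversions"] > min_conversions]
    ranked = sorted(eligible, key=lambda c: c["spend"] / c["conversions"])
    return ranked[:keep]


# Hypothetical day-7 results.
creatives = [
    {"name": "A", "ctr": 0.031, "spend": 900.0, "conversions": 6},  # CPA $150
    {"name": "B", "ctr": 0.014, "spend": 320.0, "conversions": 8},  # CPA $40
    {"name": "C", "ctr": 0.022, "spend": 200.0, "conversions": 2},  # too few conversions
    {"name": "D", "ctr": 0.018, "spend": 420.0, "conversions": 7},  # CPA $60
]

survivors = pick_survivors(creatives)
for c in survivors:
    print(c["name"], f"CPA ${c['spend'] / c['conversions']:.2f}")
```

Creative A has the highest CTR in this made-up data, yet it is dropped because its CPA is the worst of the eligible set.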

Objective: Create 10-15 variations of your winning concept

What to test (one variable at a time):

Key: Test ONE element at a time. If you change multiple variables at once, you can't identify which one drove performance.
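One way to enforce the one-variable rule is to generate the variation list programmatically, so every variant differs from the winner in exactly one field. This is only a sketch: the element names (`hook`, `headline`, `cta`) and the sample copy are hypothetical placeholders, not a prescribed list.

```python
def single_variable_variations(base, alternatives):
    """Build variants that each change exactly one field of the base creative."""
    variations = []
    for field, options in alternatives.items():
        for option in options:
            if option == base[field]:
                continue  # identical to the winner, nothing to test
            variant = dict(base)
            variant[field] = option
            variations.append((field, variant))
    return variations


# Hypothetical winning creative and the alternatives to try for each element.
base = {"hook": "Tired of wasted ad spend?", "headline": "Test smarter", "cta": "Shop now"}
alternatives = {
    "hook": ["Your ads are bleeding money", "Stop guessing what works"],
    "cta": ["Learn more", "Get started"],
}

variations = single_variable_variations(base, alternatives)
for changed_field, variant in variations:
    print(f"changed {changed_field}: {variant[changed_field]}")
```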

Expected outcome: 2-3 variations outperform original

Objective: Scale proven winners to maximum profitable spend

Once you have 2-3 creatives delivering target CPA with 15-50+ conversions:

Most advertisers make creative decisions with statistically insignificant data.

Common scenario:

Problem: Neither conclusion is valid. Sample size too small.
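A quick way to see why small samples mislead is to put a confidence interval around each creative's conversion rate. The sketch below uses the standard Wilson score interval at roughly 95% confidence; the click and conversion counts are hypothetical, chosen only to illustrate a typical early read.

```python
import math


def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a proportion (z=1.96 is roughly 95% confidence)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


# Hypothetical early results: conversions out of clicks.
creative_a = wilson_interval(2, 40)  # looks like a "winner"
creative_b = wilson_interval(0, 35)  # looks like a "loser"

print(f"A: {creative_a[0]:.1%} to {creative_a[1]:.1%}")
print(f"B: {creative_b[0]:.1%} to {creative_b[1]:.1%}")

# Overlapping intervals mean the data cannot separate the two creatives yet.
overlap = creative_a[0] < creative_b[1] and creative_b[0] < creative_a[1]
print("Intervals overlap:", overlap)
```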

At $50 AOV: Minimum $100-150 spend per creative, 5-10 conversions before judgment

At $100+ AOV: Minimum $150-250 spend per creative, 3-5 conversions minimum

The math: If your target CPA is $40:

To properly test 15 creatives, you need $1,500-2,250 in testing budget.

Can't afford that? Test fewer creatives properly, not more improperly.

The problem: Meta's algorithm picks a favorite in the first 2 hours and starves everything else.

What happens: You upload 5 ads → Creative #3 gets 3 quick clicks → Meta allocates 80% of budget to #3 → Other 4 get $10 total over 3 days → Creative #3 has terrible CPA but got all the spend.

Instead of: Uploading 5 ads simultaneously on Monday

Do this: Upload 2 ads Monday, 2 ads Wednesday, 1 ad Friday

Why this works: Each ad gets its own initial "discovery phase" without competing against the rest of the batch. The algorithm evaluates each ad individually, so you gather cleaner data on true performance.
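If you plan uploads in a script or spreadsheet, the staggered schedule can be sketched like this. The ad names are placeholders, and the Monday/Wednesday/Friday plan is the one from the example above.

```python
def stagger_uploads(ads, plan):
    """Assign ads to upload days. `plan` is a list of (day, count) pairs."""
    total = sum(count for _, count in plan)
    if total != len(ads):
        raise ValueError(f"plan covers {total} ads but {len(ads)} were given")
    remaining = iter(ads)
    return {day: [next(remaining) for _ in range(count)] for day, count in plan}


ads = ["ad_1", "ad_2", "ad_3", "ad_4", "ad_5"]
plan = [("Monday", 2), ("Wednesday", 2), ("Friday", 1)]

schedule = stagger_uploads(ads, plan)
for day, batch in schedule.items():
    print(f"{day}: {', '.join(batch)}")
```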

If you're using CBO and the algorithm keeps starving ads, set a minimum spend per ad ($15-20/day) in campaign settings. This forces Meta to give each creative at least minimal funding. It works better at higher daily budgets ($200+/day).

Your design team doesn't need to work harder. They need to work smarter.

Monday: Research & Planning (2 hours)

Tuesday-Thursday: Production Days (3-4 hours per day)

Batch approach: Create 5-7 variations of the same hook type per day

Friday: Upload & Setup (1 hour)

Total design time: 12-15 hours per week for 15-20 creatives
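The weekly total follows directly from the daily blocks. A small sketch that adds up the low and high ends of each day's estimate (the dictionary keys are just labels):

```python
# (low, high) hours per day, taken from the weekly workflow above.
weekly_hours = {
    "Monday: research & planning": (2, 2),
    "Tuesday: production": (3, 4),
    "Wednesday: production": (3, 4),
    "Thursday: production": (3, 4),
    "Friday: upload & setup": (1, 1),
}

total_low = sum(low for low, _ in weekly_hours.values())
total_high = sum(high for _, high in weekly_hours.values())
print(f"Total design time: {total_low}-{total_high} hours per week")
```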

Key insight: Batch production of similar concepts is far faster than creating unrelated concepts one at a time. A designer can create 5 variations of the same hook in 90 minutes, versus about 6 hours for 5 completely different concepts, which is roughly a 4x difference.

For teams that need higher volume (20+ creatives/week) or want to reduce production time from 12-15 hours to 3-5 hours:

Monday: Research (2 hours)

Tuesday: Generate Variations (1 hour)

Wednesday-Thursday: Review & Select (2 hours)

Friday: Upload (30 minutes)

Total design time: 3-5 hours per week for 15-20+ creatives

When this makes sense:

The hybrid approach: Many teams use both methods—manual batch production for original concepts, AI generation for scaling variations of winners.

Single Testing Campaign Architecture:

Campaign: Creative Testing Lab

Ad Set: Broad Testing

Ads: 15-20 active creatives

Why this works: One campaign = consistent learning, broad targeting = tests creative strength, ad-level kills = remove losers without disrupting winners.

Learning phase note: The learning phase matters for scaling, NOT testing. When testing, you want disruption. The learning phase becomes relevant once you have winners and want to scale them in a separate campaign.

Formula: Daily budget = $10-15 × number of active creatives

Examples:
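As a rough sketch of the formula, the snippet below computes the daily budget range for a few creative counts. The counts are illustrative; the 15-creative case matches the $150-225 daily figure quoted earlier.

```python
def daily_budget_range(active_creatives, per_creative=(10, 15)):
    """Daily budget range: per-creative spend multiplied by active creatives."""
    low, high = per_creative
    return active_creatives * low, active_creatives * high


for n in (5, 10, 15, 20):
    low, high = daily_budget_range(n)
    print(f"{n} active creatives: ${low}-{high}/day")
```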

Can't afford this? Test fewer creatives properly.

Better: 5 creatives × $150 each = 5 valid tests

Worse: 15 creatives × $50 each = 15 inconclusive tests
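Both options cost the same $750; the difference is how many tests clear the minimum spend. A minimal sketch, assuming a $150 threshold per valid test (taken from the comparison above; your own threshold depends on AOV and target CPA):

```python
def valid_tests(total_budget, n_creatives, min_spend_per_test):
    """Return spend per creative and how many tests reach the minimum spend."""
    spend_each = total_budget / n_creatives
    valid = n_creatives if spend_each >= min_spend_per_test else 0
    return spend_each, valid


def max_properly_tested(total_budget, min_spend_per_test):
    """Largest number of creatives the budget can fund at the minimum spend."""
    return int(total_budget // min_spend_per_test)


budget = 750          # same total in both scenarios
min_spend = 150       # assumed minimum spend for a valid test

for n in (5, 15):
    spend_each, valid = valid_tests(budget, n, min_spend)
    print(f"{n} creatives: ${spend_each:.0f} each, {valid} valid tests")

print("Max creatives at this budget:", max_properly_tested(budget, min_spend))
```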

If you're running $10-20/day total budget: You cannot properly test 15-20 creatives per week. The math doesn't work.

Adapted strategy for small budgets:

Test 2-3 creatives per week, not 15-20:

Monthly capacity at $20/day: 8-12 creatives properly tested, 2-3 winners identified. Better than 40 creatives improperly tested with no learnings.

The principle: Depth over breadth at small budgets.

Kill immediately if:

Kill after 5-7 days if:

Keep testing if:

Scale immediately if:

Realistic expectations for 15-20 creatives/week testing:

This is sustainable high-volume testing: Not hoping all 15 work, but systematically finding the 2-3 that do through volume. Proven winners fatigue after 4-8 weeks, but continuous testing provides replacements.

Testing 15-20 creatives per week doesn't burn out your design team when you:

The teams that win produce systematic variations of proven concepts, test properly, and scale winners ruthlessly. Your design team doesn't need 80-hour weeks. They need a system that tells them exactly what to create, when, and why.

High-volume creative testing in 2026 isn't about brute force; it's about systematic iteration. Test concepts broadly, find winners using sample sizes large enough to trust, iterate deeply on what works, and scale hard. That's how 15-20 creatives per week becomes sustainable instead of exhausting.

Arpita Mahato

Content Writer, Vibemyad

Rahul Mondal

Product, Design and Co-founder, Vibemyad