May 04, 2026 • 11 min read

TL;DR

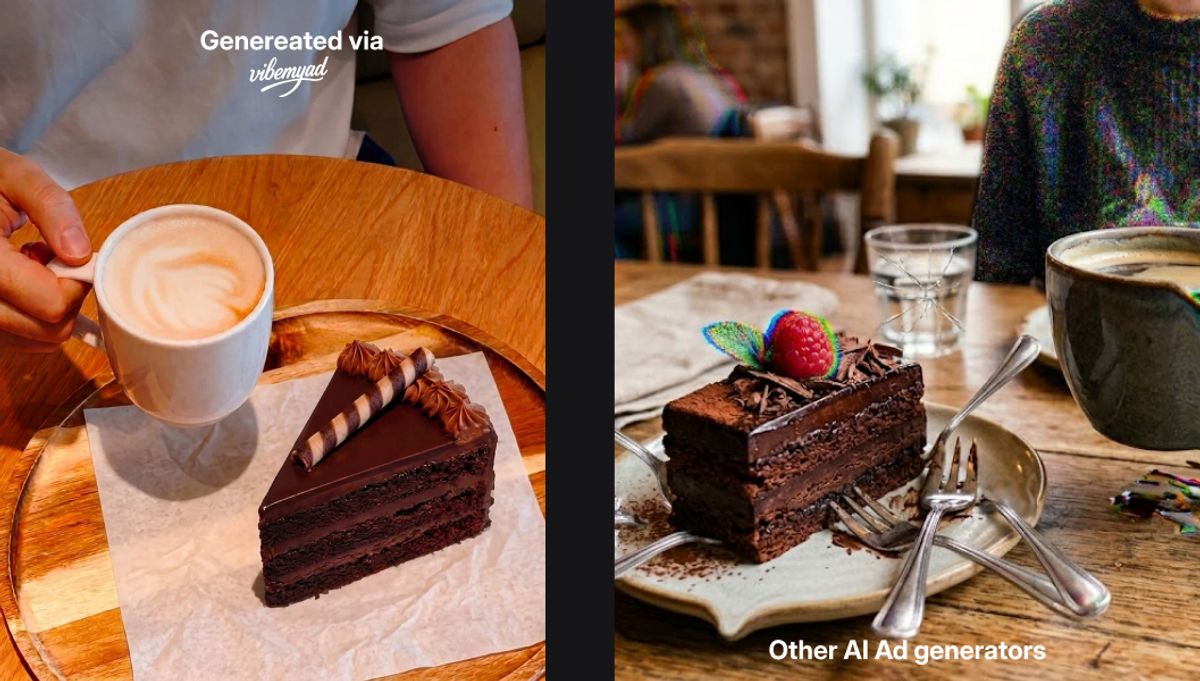

Comparison of generic AI ad output vs intelligence-first ad generated by Vibemyad Ad Gen.

Most people who try to generate ads with AI for the first time walk away disappointed. The output looks generic. The copy sounds like a press release. The visual has no connection to the brand's actual creative direction. They try a few prompts, get a few mediocre results, and conclude that AI ad generation is not ready yet.

The conclusion is understandable. But it is wrong about the reason.

The problem is not AI ad generation. The problem is where most AI ad generators start. A prompt is not a creative brief. It is a guess. And AI systems built to respond to guesses produce creative that reflects the statistical average of everything they have been trained on, which is exactly what generic looks like.

In 2026, there is a different approach available. It starts from what competitors have already proven works on Meta, uses that intelligence as the foundation for the brief, and produces creative that is differentiated from the category rather than averaged from it. This guide explains how it works, what it produces, and how to use Vibemyad Ad Gen to run the process from a single conversation.

Generating an ad with AI is the process of using artificial intelligence to produce advertising creative, including visual design, copy, and layout, without requiring manual design work for each individual output. In 2026, AI ad generation ranges from simple prompt-to-image tools that produce a single static visual to fully agentic systems where a conversational AI manages the entire creative workflow: research, briefing, concept development, and production.

The distinction that matters is not whether AI is involved. It is what the AI is working from. An AI ad generator working from a text prompt is producing creative based on a description. An AI ad generator working from validated competitor intelligence and live Meta ad data is producing creative based on evidence of what is already converting in the market.

These are not the same product. The output quality, brand relevance, and conversion potential differ substantially, and the gap between them is the central story of AI ad generation in 2026.

Two years ago, generating an ad with AI meant using a tool to produce a rough visual from a text description and then spending time in a design tool making it usable. The AI was a starting point at best and a time sink at worst.

That is not what AI ad generation looks like in 2026. The technology has moved significantly. What is now possible, and what the best tools in the category are actually delivering, includes full static ad production from a conversation, brand-consistent outputs that use a brand's actual fonts and visual language, carousel formats built to platform specifications, and agentic systems that research the competitive landscape before a single creative decision is made.

Meta's own advertising ecosystem has evolved alongside this. The volume of creative that brands need to remain competitive on Meta, across placements, audiences, and testing cycles, has made manual production a genuine bottleneck for most teams. Research published by Meta for Business indicates that advertisers who refresh creative regularly significantly outperform those who leave the same creative running, with creative quality identified as one of the primary drivers of ad performance at the auction level. AI ad generation in 2026 is not a novelty. For brands running paid Meta campaigns, it is becoming a production infrastructure decision.

The question is not whether to use AI for ad generation. It is which starting point the AI is working from, and how much of the creative process it can genuinely handle without manual intervention.

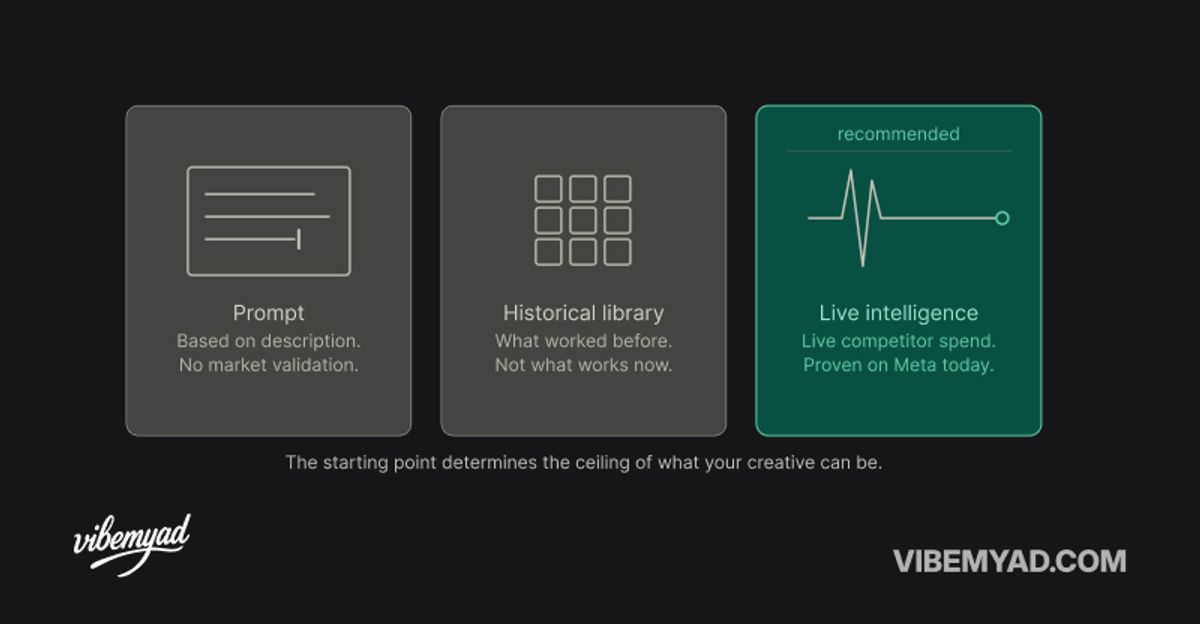

Three AI ad generation starting points compared - prompt, historical database, and live market intelligence

This is the part most guides on AI ad generation skip over, and it is the most important thing to understand before you generate a single creative.

Every AI ad generator has a starting point. That starting point determines the ceiling of what the output can be.

The most common starting point is a prompt. You describe what you want, and the AI produces something that matches the description. The problem with prompts as a starting point is that a prompt is a hypothesis. You are describing what you think might work based on intuition, category knowledge, or what you have seen before. The AI has no way to tell you whether the creative direction you are describing has actually performed in your category on Meta. It can only produce what you asked for.

The second starting point is a creative template or historical ad database. Some tools let you browse a library of past ads, select a format, and generate a variation. This is better than a blank prompt because at least there is a reference point. But historical libraries have a fundamental problem: they show you what worked before. They cannot tell you what is working right now in your specific competitive landscape, against your specific competitor set, with your specific audience.

The third starting point, and the one that produces materially better output, is live market intelligence. Starting from the ads competitors are actively putting spend behind on Meta right now, filtered to your category and sorted by run duration as a performance proxy, gives the AI a creative foundation built from evidence rather than description or history.

This is what Vibemyad Ad Vault provides and what Vibemyad Ad Gen is built on top of. Vibemyad Ad Vault indexes over 10 million Meta ads continuously and surfaces the ones sustaining real spend in any given category. Vibemyad Ad Gen uses those ads as the starting point for creative generation. The brief is built from evidence. The output reflects what the market has already validated.
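To make "filtered to your category, sorted by run duration" concrete, here is a minimal Python sketch of that selection logic. It is an illustration only, not how Vibemyad Ad Vault is implemented: the `AdRecord` fields, the `shortlist_references` function, and the 30-day minimum are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class AdRecord:
    """Hypothetical record shape; not Ad Vault's actual schema."""
    advertiser: str
    category: str
    first_seen: date
    last_seen: date
    is_active: bool

    @property
    def run_days(self) -> int:
        # Run duration in days, used here as a rough performance proxy.
        return (self.last_seen - self.first_seen).days


def shortlist_references(ads, category, min_run_days=30, limit=5):
    """Keep active ads in one category, longest-running first.

    The 30-day default is an assumed threshold, chosen only for this sketch.
    """
    candidates = [
        ad for ad in ads
        if ad.is_active and ad.category == category and ad.run_days >= min_run_days
    ]
    return sorted(candidates, key=lambda ad: ad.run_days, reverse=True)[:limit]


# Made-up sample data for illustration.
ads = [
    AdRecord("Brand A", "skincare", date(2026, 1, 10), date(2026, 4, 20), True),
    AdRecord("Brand B", "skincare", date(2026, 4, 1), date(2026, 4, 20), True),
    AdRecord("Brand C", "jewellery", date(2025, 12, 1), date(2026, 4, 20), True),
    AdRecord("Brand D", "skincare", date(2026, 2, 15), date(2026, 4, 20), True),
    AdRecord("Brand E", "skincare", date(2025, 11, 1), date(2026, 1, 5), False),
]

shortlist = shortlist_references(ads, "skincare")
for ad in shortlist:
    print(ad.advertiser, ad.run_days)
```

In this sample, the short-lived ad and the inactive one drop out, and the remaining skincare ads are ordered by how long they have been running. Run duration is only a proxy: an ad can stay live for reasons other than performance, so the shortlist is a starting point for review rather than a verdict.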

The difference in output quality between a prompt-first generator and an intelligence-first generator is not subtle. One is producing creative that looks like an average of all ads. The other is producing creative built from the specific ads that are currently winning in your category.

Definition: The Vibemyad ad generation workflow is a structured, step-by-step process that runs inside a single session at vibemyad.com/sessions. It moves from finding a validated competitor reference in Research Mode, to developing and approving creative concepts in Plan Mode, to producing finished Meta-ready ads with the agent building and self-reviewing each image layer by layer. Designers who prefer to skip the reference entirely can use Edit Mode to generate directly from a prompt.

Before any generation begins, add your brand book directly inside the Vibemyad agent. The brand book contains your logos, fonts, colours, and design guidelines. The agent does not use this as a finishing filter applied after the fact. It draws on the brand book actively while building every step of every image, which is what keeps output looking like it came from your brand rather than a generic template. Set this once and it carries across every session.

Go to Vibemyad Agent and select Research Mode. Type what you are looking for in plain language. "Bold ads for jewellery brands." "Minimalist skincare static ads." "Testimonial ads for supplements with a strong hook." The Vibemyad agent searches Vibemyad Ad Vault based on your description and surfaces matching reference ads. These are real Meta ads that have been running with real budget behind them, not stock images or training data examples. Review the results and select the reference image that best matches the creative direction you want to build from. That selection becomes the foundation for everything that follows.

Once a reference is selected, switch to Plan Mode. Choose how many variations you want to produce: 1, 2, or 3. The agent generates that number of creative concepts based on your selected reference and your brand book. Review each concept and approve or reject before any image is generated. Nothing goes to production until you confirm the direction. This is the step that prevents wasted generation cycles on a creative direction you did not actually want.

Once a concept is approved, the agent begins building the ad in layers. Step 1 is the base image. Step 2 is the hero element, which is your product, a model, or the primary visual subject of the ad. Step 3 is props and background elements. Step 4 is copy. Depending on the concept, the ad may be complete at Step 2 or Step 3 without needing every layer. At each step, the agent reviews its own output internally. If something does not pass that review, the agent retries automatically without flagging you. Only output that clears the agent's own review is shown.
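The layer-by-layer build with self-review can be pictured as a loop that retries each layer until it passes a check. The Python sketch below is a simplified illustration under stated assumptions, not the agent's actual implementation: `build_ad`, the `render` and `passes_review` callables, the retry limit of three, and the choice to return nothing when a layer never passes are all hypothetical.

```python
from typing import Callable, Optional

# The four layers named in the workflow, in build order.
LAYERS = ["base image", "hero element", "props and background", "copy"]


def build_ad(
    layers_needed: int,
    render: Callable[[str, int], str],
    passes_review: Callable[[str], bool],
    max_attempts: int = 3,  # assumed limit; the real retry policy is not documented here
) -> Optional[list[str]]:
    """Build layers in order, retrying each layer until it passes review.

    Returns the accepted layers, or None if any layer never passes.
    """
    accepted: list[str] = []
    for layer in LAYERS[:layers_needed]:
        for attempt in range(1, max_attempts + 1):
            candidate = render(layer, attempt)
            if passes_review(candidate):
                accepted.append(candidate)
                break
        else:
            # No attempt passed review, so nothing is surfaced.
            return None
    return accepted


# Stand-in render and review functions, purely for illustration.
def fake_render(layer: str, attempt: int) -> str:
    return f"{layer} (attempt {attempt})"


def fake_review(candidate: str) -> bool:
    # Pretend the hero element fails on its first attempt only.
    return candidate != "hero element (attempt 1)"


# A concept that is complete after three layers, with no copy layer.
result = build_ad(layers_needed=3, render=fake_render, passes_review=fake_review)
print(result)
```

The `layers_needed` argument models the point that some concepts are complete before every layer is added, and the retry on the hero element happens without the caller seeing the rejected attempt.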

Once the agent surfaces the finished creative, review it. If you want to adjust specific elements, switch to Edit Mode and make changes without restarting the full concept process. If a designer prefers to skip the reference entirely and start from scratch, Edit Mode is also where that happens: select Edit Mode, write a prompt directly, and generate without going through Research Mode first.

Most people's reference point for AI-generated ads is bad AI-generated ads. Generic visuals. Copy that sounds like a description of a product rather than a reason to want it. Layouts that look like they were built from a template nobody customised.

Good AI-generated ad output in 2026 looks different because it starts from a different brief.

When the creative foundation is a competitor ad that has been running for 90 days on Meta with sustained spend behind it, the structural choices in the output reflect a pattern the market has already validated. The hook technique is not a guess. The visual hierarchy is not a default. The call to action is positioned where the market has already shown it converts.

Across Vibemyad Ad Gen sessions, the outputs that perform best share three characteristics. The reference ad was selected based on run duration rather than visual appeal alone. The brand book was provided before generation rather than applied as an edit afterwards. And the plan was reviewed and confirmed in Plan Mode before production started, which means the agent had a clear, approved brief before a single pixel was rendered.

What this produces is a creative that looks like it came from a brand that studies the market, not a brand that typed something into a text box and published what came out. That distinction is visible in the output. It is also visible in performance data when the ad goes to Meta.

Example of a finished Meta ad generated by Vibemyad Ad Gen using a competitor reference and brand book inputs

Generating ads with AI in 2026 works when the AI is working from the right starting point. A prompt produces creative that reflects an average. A validated competitor reference produces creative that reflects what the market has already shown it responds to. The starting point is the decision that determines everything downstream.

The opportunity is not just speed. Manual ad production at the volume Meta campaigns require is a genuine production bottleneck for most teams. AI ad generation, when built on live market intelligence rather than generic training data, solves the bottleneck without introducing the brand consistency problems that plague most AI-generated creative at scale.

The workflow inside Vibemyad Ad Gen is the clearest example of what intelligence-first generation looks like in practice. Research Mode, Plan Mode, and Edit Mode each serve a distinct job in a single session at vibemyad.com/sessions. The brand book ensures every output is on-brand at the generation stage, not as a fix applied afterwards. The competitor references from Research Mode ensure every output is grounded in what the market has already validated. The result is a creative production system, not just a tool.

Arpita Mahato

Content Writer, Vibemyad
