February 23, 2026 • 9 min read

This post breaks down what creative testing really means in performance marketing and why creative is credited with driving up to 80% of ad results. You’ll learn the structured framework high-performance teams use to improve CTR, lower CPA, and protect ROAS, the metrics that matter at each funnel stage, the hidden risks of creative fatigue and saturation, and how the shift from testing to creative intelligence is redefining competitive advantage.

Creative testing is a structured, hypothesis-driven process used to evaluate specific ad variables to determine which elements materially impact performance. Through controlled experimentation using A/B testing and multivariate testing, teams measure outcomes across key performance metrics like click-through rate (CTR), conversion rate, cost per acquisition (CPA), engagement time, and return on ad spend (ROAS).
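As a quick reference, the metrics named above can be computed from raw delivery numbers. The sketch below uses the standard definitions (CTR = clicks / impressions, conversion rate = conversions / clicks, CPA = spend / conversions, ROAS = revenue / spend). The `AdStats` container and `summarize` helper are hypothetical names introduced for illustration, not part of any ad platform's API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AdStats:
    """Raw delivery numbers for one creative (hypothetical container)."""
    impressions: int
    clicks: int
    conversions: int
    spend: float
    revenue: float


def safe_div(numerator: float, denominator: float) -> Optional[float]:
    """Return numerator / denominator, or None when the denominator is zero."""
    return numerator / denominator if denominator else None


def summarize(stats: AdStats) -> dict:
    """Compute the core creative-testing metrics from raw counts."""
    return {
        "ctr": safe_div(stats.clicks, stats.impressions),        # click-through rate
        "conversion_rate": safe_div(stats.conversions, stats.clicks),
        "cpa": safe_div(stats.spend, stats.conversions),         # cost per acquisition
        "roas": safe_div(stats.revenue, stats.spend),            # return on ad spend
    }


if __name__ == "__main__":
    example = AdStats(impressions=10_000, clicks=200, conversions=10,
                      spend=500.0, revenue=1_500.0)
    for name, value in summarize(example).items():
        print(name, value)
```

Returning `None` for an undefined ratio (for example, CPA with zero conversions) is a design choice in this sketch; it keeps a brand-new creative from looking like it has a CPA of zero.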

The reason creative testing matters is structural. Industry data from the Association of National Advertisers shows that 70-80% of ad performance is driven by creative execution, not targeting adjustments or bid optimization. As algorithmic media buying standardizes performance infrastructure across platforms, creative becomes the primary differentiator.

This article outlines the structured framework high-performance teams use to move from random experimentation to systematic creative optimization, turning every test into a scalable insight instead of trial-and-error noise.

Creative testing is a structured, hypothesis-driven process for isolating and evaluating specific creative variables, such as hooks, visuals, messaging angles, formats, and CTAs, to determine their measurable impact on performance outcomes like click-through rate (CTR), cost per acquisition (CPA), conversion rate, and return on ad spend (ROAS).

Rather than launching multiple ads and hoping one performs, ad creative testing creates controlled experiments that turn creative decisions into data-backed insights.

To understand it fully, break creative testing into four core components:

The objective of creative testing is not simply to “find a winner.” It is to validate or invalidate a clear hypothesis about which creative element influences performance. For performance marketing teams, objectives typically include:

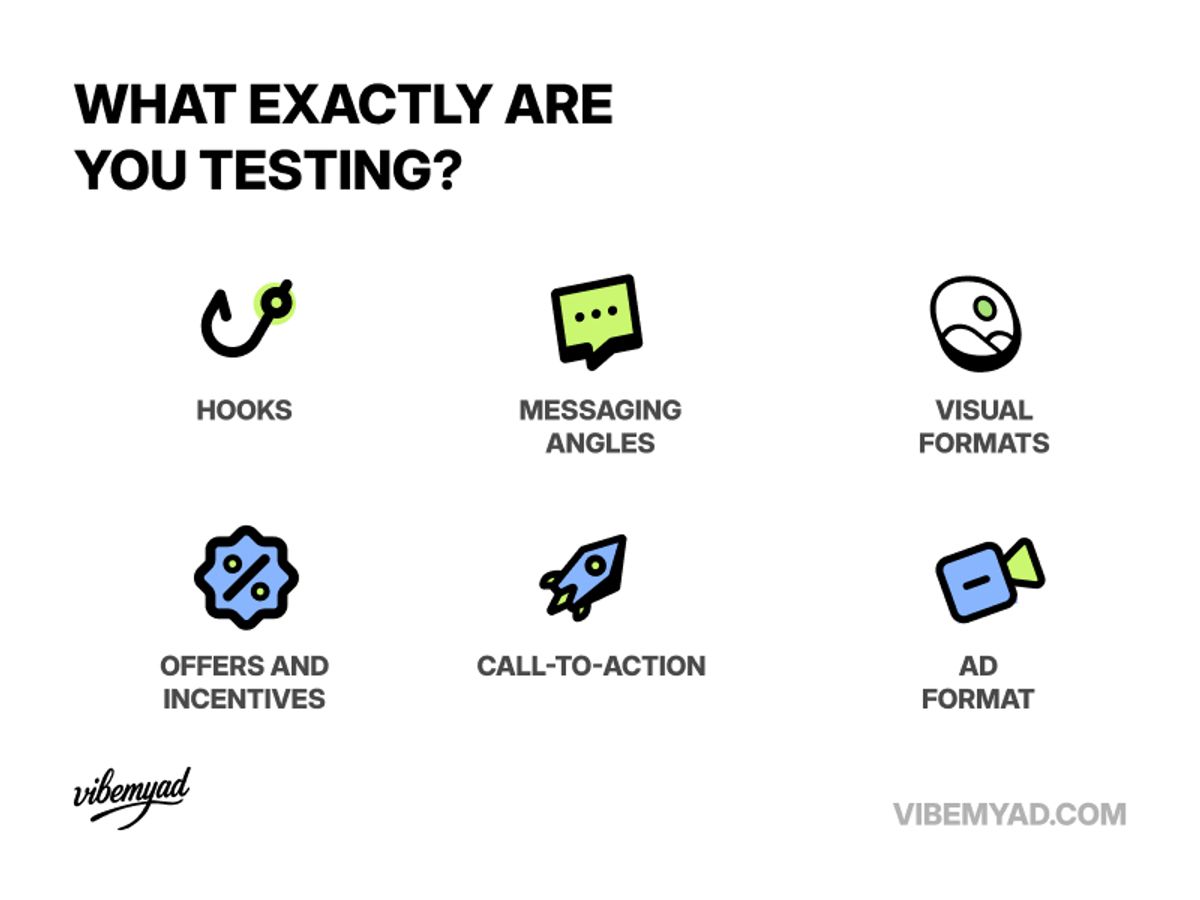

What are you testing?

Effective creative testing isolates variables to understand causality. Instead of changing everything at once, teams modify one controlled element at a time. Common creative variables include:

Isolating variables ensures performance changes can be attributed to specific creative decisions rather than noise.

Creative testing relies on performance metrics aligned to the funnel stage and campaign goals. Top-of-funnel indicators:

Mid- to bottom-funnel indicators:

In modern performance marketing, media buying infrastructure has largely been standardized. Platforms optimize bids automatically. Targeting has converged. Audiences overlap. What remains as the decisive competitive variable is creative.

Advanced targeting was once a defensible edge. Today, machine learning systems on platforms like Meta and Google automatically optimize distribution at scale.

The result? Audience overlap is high. Lookalikes are mature. Interest targeting has narrowed. Placements self-adjust. When targeting becomes commoditized, differentiation shifts to creative.

Automated bidding and delivery systems have reduced the marginal advantage of manual optimization. Media buyers can refine structure, but the performance delta is smaller than it once was.

Creative testing restores leverage. Algorithms prioritize engagement signals. Stronger creative improves CTR, feeds better data into the system, and ultimately improves CPA and ROAS. In algorithm-driven ecosystems, creative quality compounds.

When targeting and bidding normalize, creative becomes the scalable growth engine. Structured creative testing allows performance marketing teams to:

Even high-performing ads decay due to creative fatigue, and performance drops as frequency rises. Warning signs include:

Without ongoing testing, teams overscale winners until efficiency collapses. By the time fatigue is visible, budget waste has already accumulated.
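One way to catch fatigue before it becomes expensive is to watch for CTR falling away from its peak while frequency climbs. The sketch below is a minimal illustration of that idea. The function name `detect_fatigue`, the 30% CTR-drop threshold, and the frequency floor of 3.0 are all assumptions chosen for the example, not benchmarks from this article or from any platform.

```python
from typing import List, Optional, Tuple

# Each entry: (impressions, clicks, average frequency) for one day, in date order.
DailyRow = Tuple[int, int, float]


def detect_fatigue(daily: List[DailyRow],
                   ctr_drop: float = 0.30,
                   min_frequency: float = 3.0) -> Optional[int]:
    """Return the index of the first day that looks fatigued, or None.

    A day is flagged when frequency is at or above `min_frequency` AND
    CTR has fallen at least `ctr_drop` (as a fraction) below the best
    CTR seen so far. Both thresholds are illustrative assumptions.
    """
    peak_ctr = 0.0
    for day_index, (impressions, clicks, frequency) in enumerate(daily):
        if impressions == 0:
            continue  # no delivery, nothing to measure
        ctr = clicks / impressions
        peak_ctr = max(peak_ctr, ctr)
        dropped = ctr <= peak_ctr * (1 - ctr_drop)
        if peak_ctr > 0 and frequency >= min_frequency and dropped:
            return day_index
    return None


if __name__ == "__main__":
    history = [
        (1000, 20, 1.2),
        (1000, 22, 1.8),
        (1000, 18, 2.6),
        (1000, 14, 3.4),  # CTR well below peak and frequency above the floor
    ]
    print("first fatigued day index:", detect_fatigue(history))
```

In practice the thresholds would be tuned per account, and a rolling average would smooth out single-day noise.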

A disciplined creative testing framework enables proactive rotation, early saturation detection, and sustained performance stability. To see the framework in practice, visit Vibemyad, an AI-powered creative intelligence platform, and explore how A/B testing your creative can improve results.

Creative testing shouldn’t feel like throwing variations into the algorithm and hoping something sticks. High-performance teams treat it as a disciplined process. Here’s the framework that separates guesswork from scalable results.

Start with a gap, not a guess

Before producing new creatives, ask: Where exactly is performance breaking?

Creative testing should begin with diagnosis. If you don’t know which layer is underperforming (hook, message, format, or offer), you’ll end up testing everything and learning nothing.

Form a clear hypothesis

Every test needs a reason. Instead of “let’s try something new,” define: If we change this variable, we expect this metric to move because of this audience insight.

For example, if we shift from feature-led copy to outcome-led messaging, CTR should improve because users respond more to tangible results than to product specs. A strong hypothesis protects your budget. It forces strategic thinking before execution.

Isolate One Variable at a Time

The fastest way to confuse performance data is to change five things at once. Test one dimension per round:

When you isolate variables, you can separate causality from correlation. That clarity compounds over time.
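To make single-variable isolation concrete, here is a small sketch that builds test variants from a control ad by changing exactly one dimension and leaving everything else untouched. The dictionary layout and the helper names `single_variable_variants` and `changed_dimensions` are hypothetical, introduced only for illustration.

```python
from typing import Dict, List


def single_variable_variants(control: Dict[str, str],
                             dimension: str,
                             options: List[str]) -> List[Dict[str, str]]:
    """Build variants that differ from the control in one dimension only."""
    if dimension not in control:
        raise KeyError(f"unknown creative dimension: {dimension}")
    variants = []
    for option in options:
        if option == control[dimension]:
            continue  # identical to the control, so not a real variant
        variant = dict(control)      # copy every other element unchanged
        variant[dimension] = option  # change only the tested dimension
        variants.append(variant)
    return variants


def changed_dimensions(control: Dict[str, str],
                       variant: Dict[str, str]) -> List[str]:
    """List the dimensions where a variant differs from the control."""
    return [key for key in control if control[key] != variant.get(key)]


if __name__ == "__main__":
    control = {"hook": "question", "visual": "product demo", "cta": "Shop now"}
    for v in single_variable_variants(control, "hook",
                                      ["question", "statistic", "testimonial"]):
        print(v, "differs in", changed_dimensions(control, v))
```

A check like `changed_dimensions` can also be run over an existing test plan to confirm that no variant quietly changes two things at once.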

Choose the Right Testing Structure

Align KPIs Before You Launch

Creative testing fails when teams chase the wrong metric.

Define your primary KPI and your guardrails before the campaign goes live. Otherwise, you’ll crown the wrong winner.
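The idea of a primary KPI plus guardrails can be sketched as a two-step selection: first drop any variant that violates a guardrail, then rank the survivors on the primary metric. The `pick_winner` function, the variant dictionaries, and the specific guardrail (ROAS of at least 2.0) are assumptions made up for this example.

```python
from typing import Callable, Dict, List, Optional

Variant = Dict[str, object]


def pick_winner(variants: List[Variant],
                primary: str = "cpa",
                lower_is_better: bool = True,
                guardrails: Optional[Dict[str, Callable[[float], bool]]] = None
                ) -> Optional[Variant]:
    """Pick the best variant on the primary KPI among those passing all guardrails.

    `guardrails` maps a metric name to a check that must return True.
    Returns None when no variant passes every guardrail.
    """
    guardrails = guardrails or {}
    eligible = [
        v for v in variants
        if all(check(v[metric]) for metric, check in guardrails.items())
    ]
    if not eligible:
        return None
    chooser = min if lower_is_better else max
    return chooser(eligible, key=lambda v: v[primary])


if __name__ == "__main__":
    results = [
        {"name": "A", "cpa": 40.0, "roas": 1.5},  # cheapest, but fails the ROAS guardrail
        {"name": "B", "cpa": 45.0, "roas": 2.4},
        {"name": "C", "cpa": 60.0, "roas": 3.0},
    ]
    winner = pick_winner(results, primary="cpa",
                         guardrails={"roas": lambda r: r >= 2.0})
    print("winner:", winner["name"] if winner else None)
```

Without the guardrail, variant A would be crowned on CPA alone, which is the "wrong winner" problem described above.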

Analyze, Learn, Iterate

Once the data reaches statistical confidence:

Document the learning. Scale deliberately. Then feed that insight into the next test. Creative testing is not about finding one winning ad. It’s about building a system that continuously improves CTR, lowers CPA, protects ROAS, and prevents creative fatigue.
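The "statistical confidence" step can be checked in several ways. One common choice for comparing the CTR or conversion rate of two ads is a two-proportion z-test, sketched below using only the Python standard library. This is an illustrative assumption about method, not the specific test the framework prescribes, and the 0.05 significance level used in the example is a convention rather than a rule.

```python
import math
from typing import Tuple


def two_proportion_z_test(successes_a: int, trials_a: int,
                          successes_b: int, trials_b: int) -> Tuple[float, float]:
    """Two-sided z-test for a difference between two proportions.

    Returns (z, p_value). For a CTR test, "successes" are clicks and
    "trials" are impressions. Uses the pooled standard error, which is
    the usual form when the null hypothesis is "no difference".
    """
    if trials_a <= 0 or trials_b <= 0:
        raise ValueError("both variants need at least one trial")
    rate_a = successes_a / trials_a
    rate_b = successes_b / trials_b
    pooled = (successes_a + successes_b) / (trials_a + trials_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / trials_a + 1 / trials_b))
    if std_err == 0:
        return 0.0, 1.0  # no variation at all, so no evidence of a difference
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Control: 200 clicks on 10,000 impressions. Variant: 260 on 10,000.
    z, p = two_proportion_z_test(200, 10_000, 260, 10_000)
    print(f"z = {z:.2f}, p = {p:.4f}, significant at 0.05: {p < 0.05}")
```

Deciding the sample size and significance level before launch, rather than checking repeatedly and stopping at the first favorable reading, keeps the false-positive rate close to what the test assumes.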

Yes, and most teams don’t realize it until ROAS starts compressing. You may be running structured creative testing. You may be rotating assets regularly. Yet CTR declines, CPA increases, and performance plateaus. The issue may not be creative fatigue. It may be market-wide saturation. Here are some of them:

Creative testing is evolving. What began as structured A/B experimentation is becoming an intelligence infrastructure. The shift is clear: from measuring performance after launch to predicting performance before scale. Here’s what defines the next phase.

Automatically classifies hooks, messaging angles, visuals, and emotional triggers to turn creative data into structured, actionable insights.

Identifies which hook types sustain CTR and engagement over time, and which decay quickly.

Flags when specific formats or messaging patterns are becoming overused across competitors.

Uses historical performance data to forecast which creative variations are most likely to improve CPA and ROAS before scaling.

Continuously monitors competitor creatives to map messaging shifts, trend adoption, and emerging whitespace.

In a market where targeting and bidding are increasingly automated, creative becomes the primary lever of growth. The teams that win are not those who produce more ads, but those who learn faster, test smarter, ground their decisions in research, and evolve from isolated experiments to structured creative intelligence.

AI-powered creative intelligence platforms like Vibemyad represent this evolution, combining structured testing insights with competitive ad analysis, saturation detection, and predictive modeling.

Arpita Mahato

Content Writer, Vibemyad

Rahul Mondal

Product, Design and Co-founder, Vibemyad
